A lightweight script that strips Microsoft’s new AI-driven features from Windows 11 has spread rapidly across GitHub and social platforms, driven by a rising privacy backlash among power users and consumer advocates. The tool, shared in code snippets and packaged downloads, promises to disable telemetry hooks, built-in AI assistants and related background services, striking a chord with users who say the features collect too much data or bloat the operating system. Its rapid spread has intensified a broader debate about the trade-offs between integrated AI functionality and user control, drawing praise from privacy-minded communities and warnings from some experts about potential stability and update risks. As adoption climbs and headlines multiply, attention is shifting to how Microsoft will respond and what this means for the future of AI integration in mainstream software.

Viral Script That Removes AI Features from Windows 11 Triggers Global Privacy Backlash

As a wave of users embraced a viral script that disables built-in AI telemetry in Windows 11 amid a global privacy backlash, the cryptocurrency sector saw immediate reverberations: debates over decentralization, data sovereignty, and the limits of on-chain privacy moved from forums into mainstream tech coverage. Bitcoin’s design, a public, append-only blockchain secured by proof-of-work consensus, offers strong censorship resistance but not anonymity; the UTXO model and transparent ledger enable address clustering and deanonymization by chain-analysis firms. By contrast, privacy-focused protocols and tools (such as CoinJoin, Monero and off-chain channels like the Lightning Network) aim to restore transactional confidentiality, a principle that many Windows users cited when they ran the script. Importantly, historical examples underscore trade-offs: sanctioned mixers (notably the 2022 action against Tornado Cash) demonstrate how regulatory pressure can limit certain privacy tools, while Bitcoin’s fourth halving in April 2024, which cut the block subsidy by 50% as happens every 210,000 blocks, shows how protocol events alter miner economics and, indirectly, network resilience. Taken together, these dynamics make clear that privacy preferences are reshaping demand for both on-chain and off-chain solutions, and that technical literacy about UTXO management, address reuse and mempool behavior is now a practical necessity for users who expect confidentiality.
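The halving arithmetic mentioned above can be made concrete with a short sketch. It assumes the commonly documented parameters (an initial subsidy of 50 BTC and a halving every 210,000 blocks); the function and constant names are illustrative and not taken from any particular library.

```python
COIN = 100_000_000            # satoshis per bitcoin
HALVING_INTERVAL = 210_000    # blocks between subsidy halvings
INITIAL_SUBSIDY = 50 * COIN   # subsidy of the earliest blocks, in satoshis


def block_subsidy(height: int) -> int:
    """Return the block subsidy in satoshis at a given block height."""
    halvings = height // HALVING_INTERVAL
    if halvings >= 64:
        # Shifting by 64 or more would leave nothing; treat as zero.
        return 0
    # Each halving divides the subsidy by two (integer right shift).
    return INITIAL_SUBSIDY >> halvings


if __name__ == "__main__":
    # The last block before the fourth halving, and the first block after it.
    for height in (839_999, 840_000):
        print(height, block_subsidy(height) / COIN, "BTC")
```

Under these assumptions the subsidy drops from 6.25 BTC to 3.125 BTC at height 840,000, which is the change in miner revenue the paragraph refers to.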

- For newcomers: use a hardware wallet and preserve your seed phrase offline; favor non‑custodial wallets when privacy is a priority and avoid address reuse to limit linkage.

- For experienced users: run a full node to validate your own view of the chain, employ coin‑control and multisig, and evaluate CoinJoin or privacy‑preserving layer‑2 routing while weighing legal risk.

- Market & regulatory watch: monitor AML/KYC frameworks such as the EU’s MiCA regime and jurisdictional enforcement trends that can affect liquidity for privacy‑enhancing assets.

Moving from privacy to markets, the immediate impact on crypto flows was nuanced rather than binary: heightened privacy concerns tend to increase interest in privacy-centric instruments and self-custody solutions, yet regulatory crackdowns and exchange delistings can compress liquidity and raise spreads. For example, when network congestion drives up fees (a pattern seen during major activity spikes in 2021, when fees rose by an order of magnitude), small privacy transactions become uneconomical, pushing users toward layer-2s like Lightning or batching strategies. Moreover, institutional capital responds to regulatory clarity: clearer rules can expand on-ramps and custody offerings, while uncertain enforcement tends to favor decentralized venues and peer-to-peer markets. Consequently, practical steps for market participants include hedging operational risk, maintaining a mix of on-chain and off-chain liquidity, and using analytics to stress-test privacy measures against address clustering techniques. In short, the Windows privacy backlash and its viral script have illustrated a broader point for the crypto ecosystem: technical tools can mitigate surveillance, but adoption, cost, and regulation jointly determine whether those tools deliver real-world privacy without introducing systemic market or legal risks.

Inside the Code: Technical Breakdown Shows Registry Tweaks, Service Disables and Risk of System Instability

Security researchers who dissected the viral script that promised to “nuke” AI features in Windows 11 found a pattern that is directly relevant to cryptocurrency operators: registry tweaks and service disables can produce subtle, cascading failures when applied to systems that host Bitcoin software, wallets, or full nodes. At the technical level, turning off or corrupting OS services such as time synchronization (NTP), networking stacks, USB drivers, or background update processes can break assumptions that consensus and wallet software rely on. For example, Bitcoin nodes require a block’s timestamp to be later than the Median Time Past (MTP) of the preceding 11 blocks and reject blocks whose timestamps are more than two hours ahead of the node’s own clock; disabling time services or manipulating system clocks can therefore cause a node to reject valid blocks, fall out of sync, or drop peers. Likewise, disabling USB or driver services can prevent hardware wallets (such as Ledger or Trezor) from connecting, exposing users to the concrete risk of being unable to sign or recover funds in an emergency. In the current market context, where privacy backlash has driven many users to run ad-hoc scripts from social platforms, these operational errors are not hypothetical: they are an active attack surface that can lead to lost keys, forked local views of the chain, and system instability at exactly the moments when reliable access to crypto infrastructure matters most.
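To see why a wrong system clock matters, the two timestamp rules described above can be sketched as follows. This is a simplified illustration, not node software: the function names are hypothetical, and real implementations apply further checks.

```python
MAX_FUTURE_DRIFT = 2 * 60 * 60  # two hours, in seconds
MTP_WINDOW = 11                 # number of previous blocks used for the median


def median_time_past(prev_timestamps: list[int]) -> int:
    """Median of the most recent block timestamps (up to 11 of them)."""
    window = sorted(prev_timestamps[-MTP_WINDOW:])
    return window[len(window) // 2]


def timestamp_acceptable(block_time: int, prev_timestamps: list[int], local_clock: int) -> bool:
    """Apply the two timestamp rules: later than MTP, not too far in the future."""
    if block_time <= median_time_past(prev_timestamps):
        return False  # not later than the median of recent blocks
    if block_time > local_clock + MAX_FUTURE_DRIFT:
        return False  # more than two hours ahead of this node's clock
    return True


if __name__ == "__main__":
    # Eleven hypothetical blocks spaced ten minutes apart.
    previous = [1_700_000_000 + 600 * i for i in range(11)]
    new_block_time = previous[-1] + 600

    accurate_clock = new_block_time
    slow_clock = new_block_time - 3 * 60 * 60  # clock running three hours behind

    print(timestamp_acceptable(new_block_time, previous, accurate_clock))
    print(timestamp_acceptable(new_block_time, previous, slow_clock))
```

With an accurate clock the block passes both rules; with a clock three hours slow, the same block looks too far in the future and is rejected, which is how a disabled time service can push a node out of sync.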

Consequently, both newcomers and experienced operators should treat any script or binary that changes OS-level configuration as high-risk and follow measurable safeguards to protect funds and node integrity. Practical steps include verifying software signatures and checksums, sandboxing changes in a virtual machine or using a testnet node first, and keeping redundant backups of wallet files and seed phrases offline. For operators running full nodes or validator infrastructure, maintain at least the default 8 outgoing peers and monitor block height divergence and mempool behavior; sudden drops in peer count or inability to reach the network indicate service-level interference. Additionally, consider these recommended practices:

- Use hardware wallets or multisig setups to reduce single‑point key risk.

- Verify releases using GPG signatures from official repositories before installation.

- Test changes on staging/testnet environments and maintain immutable backups in at least two separate locations.

- Monitor system services, RPC responsiveness, and time sync to detect tampering early.

Taken together, these steps help mitigate the operational risk introduced by registry-level tweaks and viral scripts, preserving both the technical integrity of nodes and the financial security of wallets amid evolving market adoption and heightened regulatory and privacy scrutiny.
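The monitoring advice above (peer count, block-height divergence, time sync) could be combined into a simple health check along the following lines. This is a minimal sketch under stated assumptions: `NodeSnapshot`, its fields, and every threshold except the 8-peer figure from the text are hypothetical, and the values would have to be gathered from your own node and from independent sources.

```python
from dataclasses import dataclass


@dataclass
class NodeSnapshot:
    outbound_peers: int          # current outgoing connections
    local_height: int            # block height reported by the local node
    reference_height: int        # height reported by an independent source
    clock_offset_seconds: float  # local clock minus a trusted time source


def health_warnings(
    snapshot: NodeSnapshot,
    min_outbound: int = 8,           # default outgoing peer count cited in the text
    max_height_lag: int = 3,         # assumed tolerance, in blocks
    max_clock_offset: float = 60.0,  # assumed tolerance, in seconds
) -> list[str]:
    """Return human-readable warnings; an empty list means no issue was detected."""
    warnings = []
    if snapshot.outbound_peers < min_outbound:
        warnings.append(f"only {snapshot.outbound_peers} outbound peers")
    lag = snapshot.reference_height - snapshot.local_height
    if lag > max_height_lag:
        warnings.append(f"local chain is {lag} blocks behind the reference")
    if abs(snapshot.clock_offset_seconds) > max_clock_offset:
        warnings.append(f"clock is off by {snapshot.clock_offset_seconds:.0f} s")
    return warnings


if __name__ == "__main__":
    degraded = NodeSnapshot(outbound_peers=2, local_height=840_000,
                            reference_height=840_010, clock_offset_seconds=-10_800)
    for warning in health_warnings(degraded):
        print("WARNING:", warning)
```

Running a check like this before and after any OS-level change gives a baseline to compare against, so service-level interference shows up as a new warning rather than as a surprise later.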

Legal and Security Experts Warn of Liability, Malware Exposure and Corporate Policy Violations

Legal and security advisers are increasingly warning that the recent surge in viral tooling, notably the widely shared script to “nuke” AI features from Windows 11, has created a fertile vector for credential-stealing malware that can transform a privacy-driven response into catastrophic financial loss. Security teams note that end users who run unvetted scripts on corporate endpoints risk introducing remote access trojans (RATs), clipboard hijackers, and other implant-based malware that target private keys and seed phrases; these secrets are the single points of failure in any self-custody arrangement. Because many enterprises maintain strict bring-your-own-device (BYOD) and software-execution policies, an employee executing a public script on a work machine can trigger policy violations, regulatory reporting obligations, and potential civil liability if corporate funds or client assets are exposed. Furthermore, attackers have repeatedly used simple techniques (for instance, replacing a copied Bitcoin address in the clipboard seconds before a user pastes it into a wallet) to divert funds, underscoring how endpoint compromise converts operational privacy concerns into balance-sheet risk for both individuals and firms.
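As a rough illustration of the clipboard-swap attack described above, the sketch below compares the address a user intended to pay with the one that was actually pasted. The function name and the address strings are placeholders invented for this example (they are not valid addresses), and a check running on a compromised machine can itself be subverted, so it is no substitute for confirming the address on a hardware device.

```python
def pasted_matches_intended(intended: str, pasted: str) -> bool:
    """Compare the full address strings after trimming surrounding whitespace.

    The whole string is compared on purpose: swapped-in addresses may be
    chosen to share the same first or last few characters.
    """
    return intended.strip() == pasted.strip()


if __name__ == "__main__":
    # Placeholder strings for illustration only.
    intended = "bc1q-placeholder-intended-address-0000"
    swapped = "bc1q-placeholder-attacker-address-0000"

    print(pasted_matches_intended(intended, intended + "\n"))
    print(pasted_matches_intended(intended, swapped))
```

The two placeholder strings share a prefix and a suffix, which is why checking only the first and last characters by eye is not enough.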

Accordingly, practitioners recommend a layered technical and governance response that balances accessibility with rigor; in practice this means combining core cryptographic hygiene with enterprise controls and user education. For newcomers and institutional users alike, immediate steps include:

- Hardware wallets and air-gapped signing to keep private keys off internet-connected devices;

- Multisignature (multisig) architectures or HSMs for high-value treasury management to reduce single-point-of-failure risk;

- Endpoint hardening, strict execution whitelists, and mandatory code review for any script distributed internally (avoid running viral scripts on corporate devices);

- Incident playbooks that include rapid chain-analysis, exchange freeze requests, and legal notification procedures to limit exposure and meet AML/KYC and data-breach obligations.

For experienced operators, advanced measures such as PSBT workflows, Shamir Secret Sharing for seed distribution, firmware attestation, and on-chain monitoring with alerts tied to anomalous UTXO movements provide additional defense-in-depth. Market context matters: as institutional interest in Bitcoin and other crypto assets grows, with adoption trends showing more corporate treasuries and ETFs exposing enterprises to on-chain risk, combining cryptographic best practices with clear corporate policies is the most effective way to mitigate both legal liability and the operational security threats amplified by viral, user-run scripts.

How Users Can Protect Themselves: Back Up Before Changing Settings, Verify Script Source, Use Official Privacy Controls and Consult IT

Security reports and incident analyses make clear that routine device changes and unverified scripts are a primary attack vector for cryptocurrency theft, because attackers target endpoints rather than breaking cryptography. In practice, that means before you alter wallet settings, install software, or run community-sourced utilities you should create a tested, encrypted backup of any BIP39 seed or keystore and verify the integrity of signing material. For newcomers this means: use a reputable hardware wallet (for example, devices that require on-device confirmation of addresses), write down the mnemonic on paper or metal, and store copies in at least two geographically separated, fire-resistant locations. For experienced users and institutions, implement multisig (e.g., 2-of-3) or threshold-key schemes for high-value holdings, maintain offline cold storage with periodic test restores, and use partially signed Bitcoin transactions (PSBTs) or air-gapped signing workflows. Furthermore, users should verify any script or tool before execution by checking vendor statements, GitHub commit histories, PGP signatures, and SHA-256 checksums, and by running unfamiliar code in an isolated VM. The recent wave of viral PowerShell utilities like the “Script to Nuke AI Features from Windows 11” highlights how privacy backlash can drive rapid sharing of unsigned scripts that may exfiltrate clipboard data, browser extension keys, or private keys. Such endpoint compromises are what security vendors often blame for a significant majority (a figure frequently cited as above 66%) of reported wallet breaches.
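The SHA-256 checksum step mentioned above can be sketched with Python’s standard `hashlib` module. The helper names are illustrative; note that a matching checksum only shows the file is the one the publisher hashed, so the published value itself should come from a trusted, ideally signed, source.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large downloads do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_checksum(path: str, published_hex: str) -> bool:
    """Compare the file's SHA-256 with the value published by the author."""
    return sha256_of_file(path) == published_hex.strip().lower()


if __name__ == "__main__":
    import sys

    # Usage: python verify.py <downloaded-file> <published-sha256>
    if len(sys.argv) == 3:
        ok = matches_published_checksum(sys.argv[1], sys.argv[2])
        print("checksum matches" if ok else "CHECKSUM MISMATCH: do not run this file")
```

A mismatch means the file was altered or corrupted somewhere between the publisher and your machine, and it should not be executed.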

Looking ahead, those precautions matter both for individual investors and for firms navigating heightened regulatory scrutiny and growing institutional adoption of custody services. Consequently, apply official OS and application privacy controls (disable unnecessary telemetry, restrict clipboard access, and use sandboxed browser profiles), and consult IT or a security operations team when in doubt; enterprises should require code signing and centralized approval workflows before permitting scripts on workstations. In addition, consider these practical steps to reduce operational risk:

- Use hardware verification: always confirm recipient addresses on-device rather than trusting a copy-paste operation.

- Isolate high-value operations: perform signing on an air-gapped machine or via a dedicated signing device.

- Validate provenance: prefer official releases, signed binaries, and well-audited open-source projects for wallet software and DeFi tooling.

Transitioning from practice to policy, market dynamics (including growing DeFi activity and MEV pressure on Ethereum and other smart-contract platforms) mean that user error and compromised endpoints can have outsized financial consequences. Users and firms should therefore balance innovation with disciplined operational security, document recovery procedures, and update incident response plans to include key rotation, compromise detection, and legal and financial notification protocols. These measures give both new entrants and seasoned practitioners concrete, defensible steps to protect private keys, preserve on-chain assets, and respond faster when threats emerge.

Q&A

Summary

A community-created script that claims to remove or disable Windows 11’s built-in “AI” features has circulated widely online, sparking debate about privacy, user control and system safety. The following Q&A answers common reader questions about the script, the privacy concerns driving its popularity, technical and legal risks, and safer alternatives.

Q: What is this script and why is it getting attention?

A: The script is a user-written utility that automates removal or disabling of components of Windows 11 that are commonly associated with Microsoft’s recent AI integrations – such as apps, services, telemetry settings or cloud-dependent features. It has gone viral because many users concerned about data collection and cloud‑based processing see it as a one‑click way to regain control of their machines.

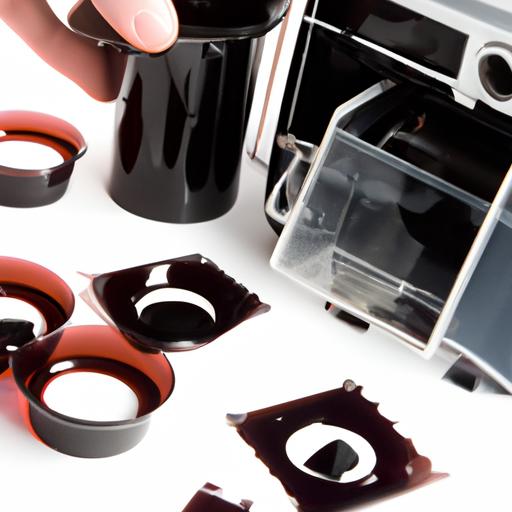

Q: Which Windows components does the script target?

A: Variants of such scripts typically target a mix of optional apps, background services, scheduled tasks, telemetry/diagnostics settings, and online-connected components. Specific targets vary by script version; some aim only to change privacy settings, while others remove installed packages or edit the registry. Because there is no single standard script, the exact components removed or disabled differ.

Q: Who made the script and is it trustworthy?

A: Viral scripts are often produced and shared by anonymous or pseudonymous developers on forums and social platforms. Trustworthiness depends on the author and whether the code has been peer-reviewed. Users should assume risk unless the script is open source, widely audited, and vouched for by credible security researchers.

Q: What are the privacy concerns motivating people to use it?

A: The concerns include: telemetry and diagnostic data Microsoft may collect; local apps or features that send data to cloud services for AI processing; and unclear or evolving data-use policies around AI features. For some users, the perception that Windows is becoming more cloud-centric and data-hungry is driving demand for tools that remove such functionality.

Q: Is running the script risky?

A: Yes. Risks include:

- Breaking system functionality (removing components Windows expects).

- Losing support or warranty remedies for modified systems.

- Causing update failures or leaving the OS in an unstable state.

- Introducing security vulnerabilities if critical services are disabled.

- Executing malicious code if the script is tampered with.

Always assume nontrivial risk unless you can inspect and test the script in a safe environment.

Q: Could the script “brick” my PC or cause data loss?

A: Possibly. Scripts that remove drivers, system apps, or modify registry keys can render Windows unbootable or break features. Back up important data before making broad system changes and avoid running unknown scripts on production machines.

Q: Are there legal or terms‑of‑service issues with using such scripts?

A: Generally, altering your own software is legal in most jurisdictions, but modifying licensed software can affect support agreements and terms of service. In enterprise environments, running unsanctioned scripts can breach company policies. Distributing a script that incorporates copyrighted or proprietary code without permission could raise legal concerns.

Q: What should users do if they’re worried about privacy but don’t want to run risky scripts?

A: Safer alternatives include:

- Review and change Windows privacy settings (Diagnostics & feedback, Speech, Activity history).

- Use a local account rather than a Microsoft account for a less cloud-integrated setup.

- Disable cloud features individually (OneDrive, Cortana, etc.) through official settings.

- Use Group Policy or MDM controls for granular, supported configuration (especially in business setups).

- Keep Windows updated and use reputable privacy-focused utilities recommended by trusted security professionals.

Q: How can I evaluate whether a removal script is safe to run?

A: Steps to reduce risk:

- Only consider open source scripts with readable code and an audit trail.

- Read the code line-by-line or have a trusted expert do so.

- Test in a virtual machine or on a noncritical test device first.

- Use system backups or create a full disk image to allow full recovery.

- Check community discussion, independent security audits, and reputable tech coverage.
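As a starting point for the line-by-line review suggested above, a small helper can flag lines of a PowerShell script that deserve a closer look. This is a heuristic sketch, not a malware scanner: the pattern list is an assumption chosen for illustration (the cmdlet names are standard PowerShell ones), obfuscated code will evade it, and it does not replace reading the script or testing it in a VM.

```python
import re

# Illustrative patterns only; extend or adjust for your own review.
RISKY_PATTERNS = {
    "downloads remote content": r"\b(Invoke-WebRequest|Invoke-RestMethod|iwr)\b",
    "executes dynamically built code": r"\b(Invoke-Expression|iex)\b",
    "edits the registry": r"\b(Set-ItemProperty|New-ItemProperty|Remove-ItemProperty)\b",
    "removes app packages": r"\bRemove-Appx(Provisioned)?Package\b",
    "stops or reconfigures services": r"\b(Stop-Service|Set-Service)\b",
}


def flag_risky_lines(script_text: str) -> list[tuple[int, str, str]]:
    """Return (line number, reason, line) for each line matching a risky pattern."""
    findings = []
    for number, line in enumerate(script_text.splitlines(), start=1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue  # skip blank lines and PowerShell comments
        for reason, pattern in RISKY_PATTERNS.items():
            if re.search(pattern, stripped, flags=re.IGNORECASE):
                findings.append((number, reason, stripped))
    return findings


if __name__ == "__main__":
    # Hypothetical script fragment; the service name and registry path are made up.
    sample = r'''
# Example only
Stop-Service -Name "ExampleService"
Set-ItemProperty -Path "HKLM:\SOFTWARE\Example" -Name "Enabled" -Value 0
Write-Host "done"
'''
    for number, reason, line in flag_risky_lines(sample):
        print(f"line {number}: {reason}: {line}")
```

The output is a reading list rather than a verdict: each flagged line is a place to ask what the script changes and whether that change can be undone.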

Q: What has Microsoft said about third‑party scripts that remove features?

A: Publicly available guidance from Microsoft encourages users to use built-in settings, Group Policy and enterprise management tools to configure Windows. Vendors typically warn against unsupported modifications because they can compromise security or updatability. For specific statements, refer to Microsoft’s official support and privacy documentation.

Q: Will removing AI features stop Microsoft or other services from collecting data?

A: Not entirely. Some data collection is tied to core OS services and telemetry that may not be removable without breaking the system. Disabling optional features and limiting cloud integrations reduces exposure, but it’s difficult to guarantee zero data sharing without replacing the platform or using hardened configurations.

Q: Could this trend affect Microsoft’s product strategy or policy?

A: Widespread user backlash can influence corporate decisions and regulatory scrutiny. If many users voice privacy concerns, and especially if enterprise customers demand change, Microsoft may face greater pressure to offer clearer controls, better opt-outs, or more local-only AI options. Regulators may also examine data practices tied to AI features.

Q: What should nontechnical users do now?

A: Practical steps:

- Read Microsoft’s privacy dashboard and adjust settings.

- Use a local account if you want to minimize cloud sync.

- Turn off optional services (OneDrive sync, speech/typing personalization).

- Back up data regularly.

- Consult reputable tech outlets or a trusted technician before running third-party scripts.

Q: Where can readers find reliable information or assistance?

A: Look to official Microsoft support pages, major technology news outlets, respected security researchers, and community resources with transparent authorship. For enterprise environments, consult your IT department or an accredited vendor.

Bottom line

The viral script reflects real anxieties about AI, cloud processing and telemetry in consumer operating systems. While some users value tools that restore control, running unverified, system‑level scripts carries technical and security risks. Safer approaches include using built‑in privacy controls, testing changes in isolated environments and relying on vetted tools or expert advice before making irreversible modifications.

To Wrap It Up

As the script continues to circulate online, the episode underscores a widening unease about how quickly consumer operating systems are incorporating always-on AI capabilities, and what that means for personal data and device control. Whether users opt for community-built remedies or wait for vendor updates, the controversy is likely to keep regulators, privacy advocates and corporate security teams engaged. The debate also echoes broader industry moves to give users more control over their devices, from encrypted location-sharing and crowdsourced find networks on mobile platforms to built-in lock-and-erase options for lost hardware, highlighting that the balance between innovation and privacy will remain a central battleground in the months ahead. Expect further scrutiny, patching and public discussion as both companies and users grapple with the trade-offs of AI on everyday devices.